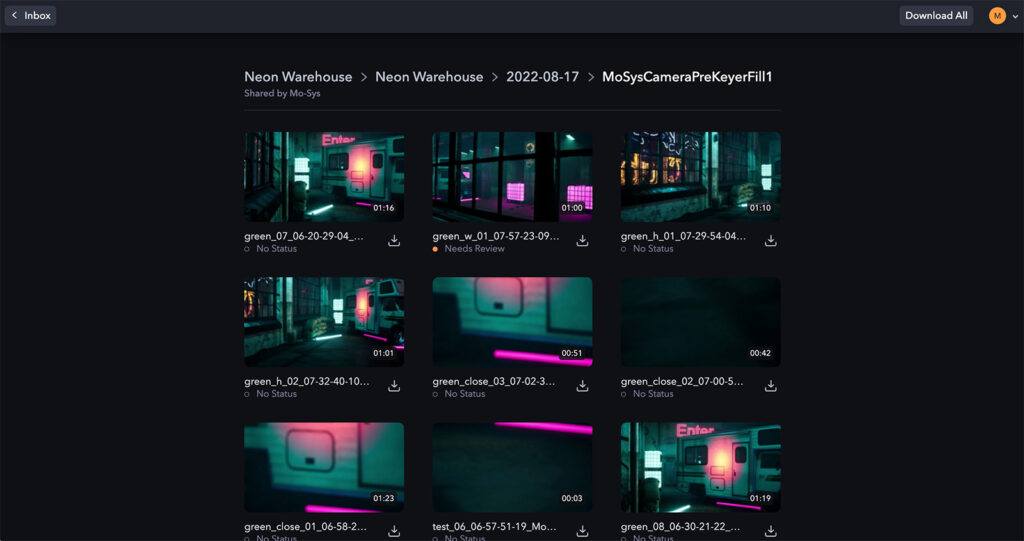

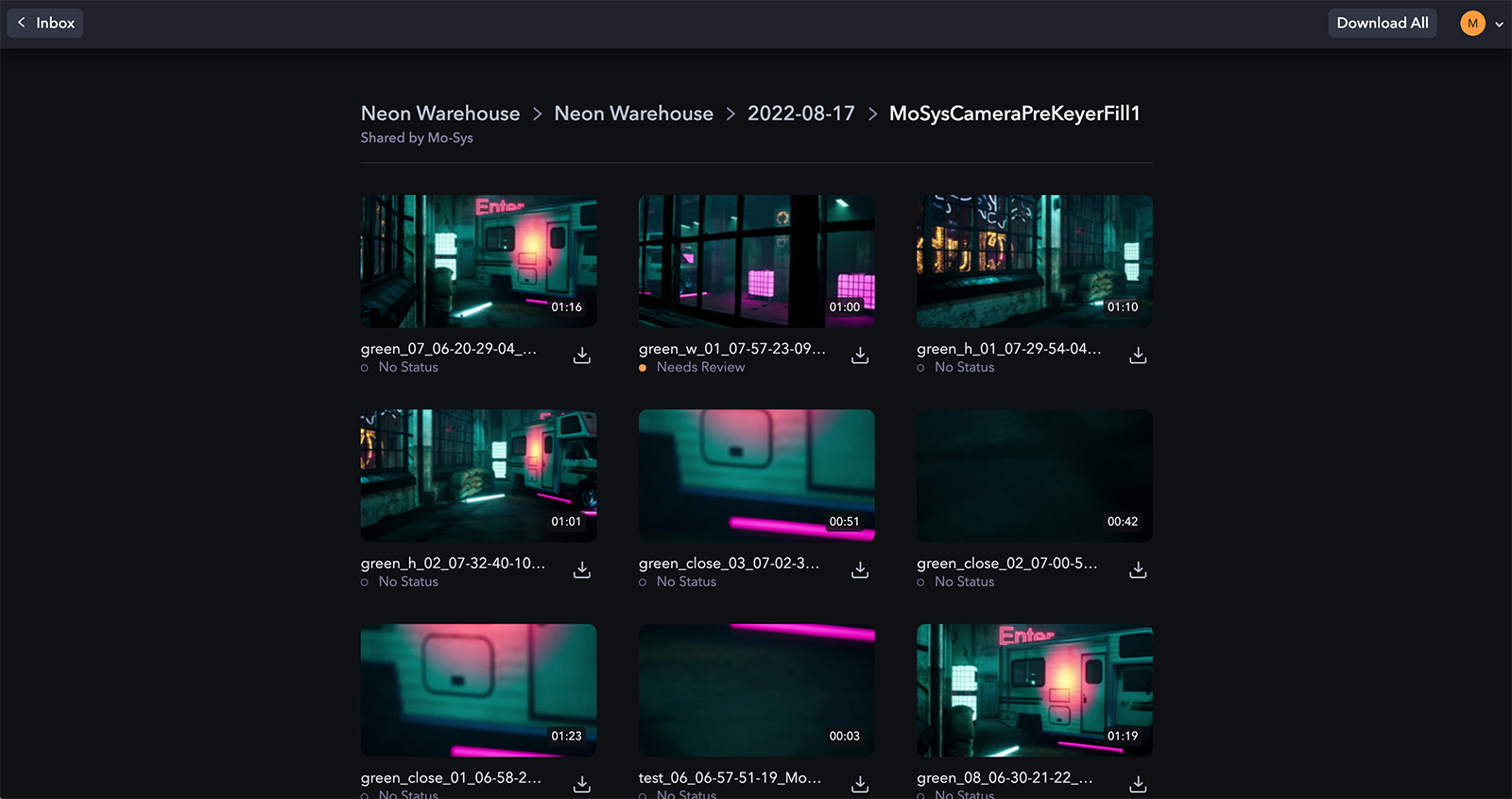

Frame.io and Mo-Sys are partnering on a groundbreaking camera-to-cloud solution for virtual production, first unveiled at IBC 2022. Here are the details:

> Using Camera to Cloud technology with Mo-Sys’ NearTime cloud-based re-rendering service, productions can see their visual effects scenes come to life in Frame.io as they’re being shot on set. This integration will democratize the accessibility, quality, and speed of virtual production work beyond the big-budget productions few can afford. Mo-Sys NearTime leverages the power of a fully automated custom Unreal render farm and can deliver higher quality real-time VFX shots in the same real-time VFX delivery window.
>
> (Source: Frame.io)

This concept offers a lot of potential for modestly budgeted virtual productions. The rendering power needed to deliver real-time performance across a large LED volume, such as the one used on The Mandalorian, is enormous. Yet camera tracking systems like Mo-Sys, combined with real-time compositing solutions such as Ultimatte and Zero Density, can deliver green screen shots almost as good as those from LED volumes.

But you still need a powerful rendering solution to make everything work in real time, and that requirement puts virtual production out of reach of smaller shows. The Frame.io/Mo-Sys integration promises to erase this barrier by combining live takes and camera tracking data with Unreal Engine rendering in the cloud. Everything comes together quickly in Frame.io for editorial and creative review.

The result is the power and image quality of real-time virtual production with far less costly infrastructure. It could be a game-changer, bringing a new level of democratization to virtual production. For more information, see Frame.io's IBC 2022 post: https://blog.frame.io/2022/09/08/ibc-2022/
